This week, Mark Zuckerberg published the results of his 2016 project: building a real-world Jarvis. This AI-based personal assistant takes commands from Zuck and, in turn, operates certain parts of his home. Here’s a video he posted (with a special voice actor) showing Jarvis’ functionalities.

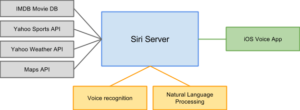

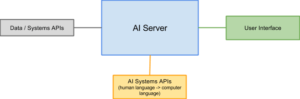

In a Facebook post, Mark described how his modern butler works with the following chart:

One of the things I immediately noticed about this diagram is that Jarvis gains most of his power from connecting through APIs. As you probably know, an API is an application programming interface that allows one piece of software to talk to another.

The fundamental idea of artificial intelligence is not new. The robot butler has been in the public consciousness since the Jetsons first hired Rosie or Iron Man was first assisted by Jarvis. What is new, however, is the sheer amount of data and actions available through APIs, and the quality of the voice, image and text processing APIs out there. Those APIs are what make Mark Zuckerberg’s project, and other artificial intelligence projects, so powerful.

Most of Jarvis’ core functionality comes not from code built from scratch, but from connecting to APIs. Three types of APIs are the real fuel for the future of artificial intelligence.

1) User Interface APIs: Tell Jarvis a command

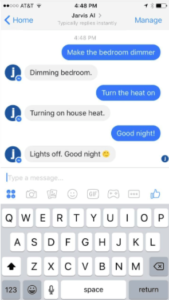

User interfaces are the different systems (Messenger, iOS Voice and Door Camera) that let Mark interface with Jarvis. Whenever Zuckerberg interacts with one of them (e.g. sends a message to the Jarvis Messenger bot or speaks a command using iOS Voice), the user interface tells Jarvis (through an API) to do something in the home.

In short, user interfaces are how the user reaches the AI, and each one connects to Jarvis through an API.

2) AI System APIs: Let Jarvis Interpret the Command

AI system APIs help Jarvis make sense of the commands passed through the user interfaces. When Mark talks to Jarvis, he does so in a way that is more human than computer-focused. For example, he speaks commands to the iOS Voice app instead of typing them into an interface. He also uses broad, slangy phrasing. In his post, he tells his new robo-butler to “shoot me a new gray t-shirt,” rather than something more computer friendly.

Jarvis, however, needs to translate these more human requests into computer commands. “Shoot me a gray t-shirt” has to be understood by the AI and broken down into actions (e.g. load t-shirt cannon, fire t-shirt cannon). This translation process occurs through AI services like Speech Recognition (which translates Mark’s speech to text) and Language Processing (which extracts operations and intents from the text).
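To make the language processing step concrete, here is a minimal sketch in Python. It is not how Jarvis or any real service works: the `Intent` class, the `RULES` table and the `interpret` function are all hypothetical names invented for illustration, and simple keyword matching stands in for the trained models a real language processing API would use.

```python
import re
from dataclasses import dataclass, field
from typing import List


@dataclass
class Intent:
    """A machine-friendly reading of a human request (hypothetical type)."""
    name: str
    actions: List[str] = field(default_factory=list)


# Hypothetical keyword rules, for illustration only. A real service
# would rely on trained language models, not word lookups.
RULES = [
    ({"shoot", "t-shirt"},
     Intent("fire_tshirt_cannon", ["load t-shirt cannon", "fire t-shirt cannon"])),
    ({"lights", "on"},
     Intent("lights_on", ["turn on lights"])),
]


def interpret(text: str) -> Intent:
    """Return the first intent whose keywords all appear as words in the text."""
    words = set(re.findall(r"[a-z][a-z'-]*", text.lower()))
    for keywords, intent in RULES:
        if keywords <= words:  # every keyword is present
            return intent
    return Intent("unknown")


print(interpret("Shoot me a gray t-shirt").actions)
```

The point of the sketch is the shape of the output: free-form text goes in, and a named intent with an ordered list of actions comes out, which is what the rest of the system needs in order to act.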

These AI systems are the backbone of Jarvis, and are consumed through APIs. In the past year, we have seen many new AI companies and systems grow and gather more attention. Facebook itself acquired Wit.ai, which falls under the Language Processing bucket (more precisely, it lets bots take human sentences and understand user intent). Similarly, Google acquired a Language Processing API (and Wit.ai competitor) called API.ai. Speech Recognition systems are also gaining popularity, with both Google and Microsoft providing them to developers through public APIs.

3) Home Systems/Data APIs: Enable Jarvis to Take Action

So far, the user interfaces let Jarvis get commands and the AI systems let it interpret them. But without more functionality, Jarvis cannot actually do anything with those commands. That’s where home systems and data APIs come in. Home systems allow developers to connect to smart home devices (like bulbs, locks and thermostats) through an API. Data APIs allow developers to connect to an outside program and retrieve data from it (e.g. getting a song from Spotify).

Home systems and data APIs translate Mark’s commands into action. For example, here he is turning on and off the lights (thanks, Fast Company!).

When Mark wants to make it warmer, Jarvis can use the thermostat API to turn the heat up. When Mark wants to have an impromptu dance party with his daughter Max, Jarvis can connect to the Spotify API and play a song in response to a simple verbal command.
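As a rough illustration of this last step, the sketch below routes an already-interpreted intent to the system that can carry it out. Everything here is an assumption made for the example: `FakeThermostat` and `FakeMusicService` are in-memory stand-ins rather than real thermostat or Spotify clients, and the intent names and the `execute` function are hypothetical.

```python
from typing import Callable, Dict, Optional


class FakeThermostat:
    """In-memory stand-in for a smart thermostat API (hypothetical)."""

    def __init__(self, temperature: int = 68) -> None:
        self.temperature = temperature

    def raise_by(self, degrees: int) -> None:
        self.temperature += degrees


class FakeMusicService:
    """In-memory stand-in for a music data API (hypothetical)."""

    def __init__(self) -> None:
        self.now_playing: Optional[str] = None

    def play(self, query: str) -> None:
        self.now_playing = query


def build_handlers(thermostat: FakeThermostat,
                   music: FakeMusicService) -> Dict[str, Callable[[dict], None]]:
    """Map each intent name to the call that fulfils it."""
    return {
        "make_warmer": lambda params: thermostat.raise_by(params.get("degrees", 2)),
        "play_song": lambda params: music.play(params["query"]),
    }


def execute(handlers: Dict[str, Callable[[dict], None]],
            intent: str, params: dict) -> None:
    """Look up the handler for an intent and run it; fail loudly if none exists."""
    handler = handlers.get(intent)
    if handler is None:
        raise ValueError(f"no handler for intent: {intent}")
    handler(params)


thermostat = FakeThermostat()
music = FakeMusicService()
handlers = build_handlers(thermostat, music)

execute(handlers, "make_warmer", {"degrees": 3})
execute(handlers, "play_song", {"query": "dance party"})
```

In a real setup, each handler would wrap a call to the device's or service's own API. Adding a new capability then means registering one more handler, without touching the interpretation layer.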

AI is All about APIs

In his post, Mark showed that the future of AI and AI-driven assistants is all about APIs. Interface APIs let Jarvis get commands from the user. AI APIs let Jarvis interpret those commands and understand what they mean. Home systems and data APIs let Jarvis actually take action.

It’s not just Jarvis that runs on APIs. In fact, any AI I can think of works that way.

Take Siri for example. Siri uses the iOS API to get a user’s voice commands. Siri then uses a (presumably proprietary) AI to convert that speech into text and the text into meaning. And lastly, Siri uses multiple APIs to return data to the user. For example, Siri may use…

- The IMDb API to return movie times and showings

- The Wolfram Alpha API to solve mathematical questions

- The Yahoo! API to share the results of last night’s game

- The Maps API to give directions

And many, many more…

In this example, Siri acts just like Jarvis. It uses three types of APIs to interface with, interpret and execute user commands.

More generally, we can model any AI assistant as that system: user interface APIs > AI APIs > home systems / data APIs.
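Read as code, the model is simply three functions chained together. The sketch below is one hedged way to express it in Python. `build_assistant` and the three stub stages are hypothetical, and each stub stands in for what would really be a call to an external API.

```python
from typing import Callable


def build_assistant(receive: Callable[[dict], str],
                    interpret: Callable[[str], str],
                    act: Callable[[str], str]) -> Callable[[dict], str]:
    """Chain the three layers: interface > AI > home systems / data."""
    def handle(raw: dict) -> str:
        text = receive(raw)       # user interface layer
        intent = interpret(text)  # AI layer
        return act(intent)        # home systems / data layer
    return handle


# Stub stages, for illustration only.
def receive_message(raw: dict) -> str:
    return raw["text"].strip()


def interpret_text(text: str) -> str:
    return "play_music" if "play" in text.lower().split() else "unknown"


def act_on(intent: str) -> str:
    return {"play_music": "music started"}.get(intent, "nothing done")


assistant = build_assistant(receive_message, interpret_text, act_on)
print(assistant({"text": " Play some music "}))
```

Because each layer only depends on the output of the one before it, any stage can be swapped for a better API without rewriting the others.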

This model shows that AIs will only be as good as the APIs they use. The better the APIs, and the more data and actions they make available, the better AIs will be.

This post was cross-posted on Medium.
